Introduction to the TeamDrive Hosting Service

TeamDrive Hosting Service Overview

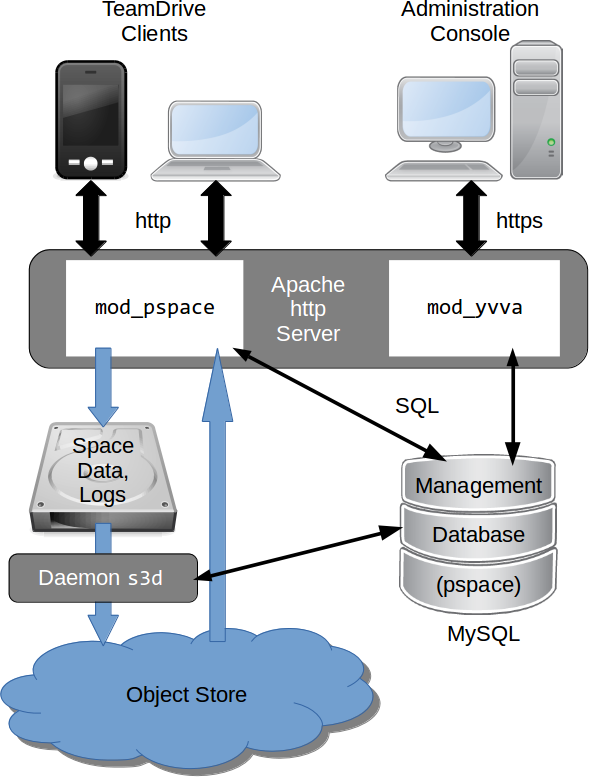

The TeamDrive Hosting Service consists of a number of components which are illustrated below:

TeamDrive Hosting Service Overview

The TeamDrive Apache module mod_pspace handles the communication and exchange of data with the TeamDrive Clients. In the default configuration, Space data is stored on a regular file system or an NFSv4 share.

The TeamDrive Hosting Service Administration Console and the TeamDrive Hosting Service API are served by the Yvva Apache module mod_yvva.

The list of Spaces, access data, usage statistics and other administrative information is stored in the Management MySQL Database called pspace.

Additionally, an Amazon S3/Azure BLOB Storage/Ceph Object Storage-compatible object store can be used as second tier storage. This significantly reduces the load on the first tier storage with regard to disk space utilization and I/O. In this case, only data “in flight”, such as the files being uploaded by the TeamDrive Clients and the Space log files, is stored temporarily on the first tier storage until the upload has completed. Only the so-called last.log files reside permanently on the first tier storage in this configuration.

TeamDrive Hosting Service using an object store

Afterwards, the files are moved to the object store asynchronously, using the TeamDrive Daemon s3d. Once they have been transferred to the object store, mod_pspace fetches the objects in question from there before serving them to the Clients, thus acting as a proxy.

Alternatively, the Hosting Service can be configured in such a way that Clients requesting these objects will receive a redirect to the object store by mod_pspace for obtaining them directly. This helps to offload network traffic from the Host Server to the object store.
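The two delivery modes described above can be sketched as a small decision function. This is only an illustration under stated assumptions: the names `ServeMode`, `Response` and `plan_response`, the example URL, and the use of HTTP status 302 for the redirect are all hypothetical and do not reflect mod_pspace's actual implementation or configuration settings.

```python
from dataclasses import dataclass
from enum import Enum


class ServeMode(Enum):
    PROXY = "proxy"        # Host Server fetches the object and relays it
    REDIRECT = "redirect"  # Client is sent to the object store directly


@dataclass
class Response:
    status: int
    source: str  # where the bytes come from, or the redirect target


def plan_response(in_object_store: bool, mode: ServeMode,
                  local_path: str, object_url: str) -> Response:
    """Decide how a requested file would be delivered (illustrative only)."""
    if not in_object_store:
        # Still "in flight": the file is served from first tier storage.
        return Response(200, local_path)
    if mode is ServeMode.REDIRECT:
        # Offload traffic: the Client fetches the object itself.
        # Status 302 is an assumption, not taken from the documentation.
        return Response(302, object_url)
    # Proxy mode: the Host Server fetches the object, then serves it.
    return Response(200, object_url)


if __name__ == "__main__":
    print(plan_response(True, ServeMode.REDIRECT,
                        "spacedata/vol01/1/data/X",
                        "https://objectstore.example.com/X"))
```

In redirect mode the Host Server only answers the initial request, which is why that mode shifts the bulk of the download traffic to the object store.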

See the chapter Setting up an Object Store in the TeamDrive Hosting Service Administration Guide for details.

A storage system combined with the associated web servers is called a TeamDrive Hosting Service. Externally, i.e. from the Registration Server or user’s perspective, the Hosting Service is referred to as a TeamDrive Host Server. However, in this documentation, references to TeamDrive Host Server refer to a single host instance running an Apache web server and the TeamDrive Hosting Service software.

TeamDrive Scalable Hosting Storage (TSHS)

The illustration above shows a “scaled-out” solution, with several Apache web servers attached to a TeamDrive Scalable Hosting Storage (TSHS) cluster. See the chapter TeamDrive Scalable Hosting Storage in the TeamDrive Hosting Service Administration Guide for details.

As an alternative to TSHS, a shared file system like NFSv4 or a distributed file system can also be used to store the data.

TeamDrive Hosting Basics

When using file system based storage, the data is stored on one or more volumes, and Spaces may be created on any volume that is “operational”. When using the TSHS cluster for storage, the volume component is ignored.

A TeamDrive Hosting Service requires a unique domain name. The domain name becomes part of the Space URL that is returned to the TeamDrive Client when a Space is created on the service. The domain name is also part of the URL used by the Clients to create Spaces, and by the Registration Server to create new Space Depots. This URL is stored in the ServiceHostURL system setting.

The same domain name is also used to access the Hosting Administration Console and the Hosting Service API. The default Hosting Administration Console URL is: https://tdhostserver.yourdomain.com/admin/

Note

Note that it is not possible to change the domain name of a Host Server, once the TeamDrive Clients have contacted it to create and access Spaces — the location of Spaces is tied to the Host Server’s host name. However, it is possible to change a Host Server’s IP address, if required.

Directory Structure of Hosted Data

The directory structure for space data stored on local storage is as follows:

    spacedata
    `-- vol01
        |-- 1
        |   |-- protolog
        |   |   |-- last.log
        |   |   |-- last.log.lock
        |   |   `-- 0.log
        |   `-- data
        |       |-- D41D8CD98F00B204E9800998ECF8427E
        |       |-- 7D0F97FC38AE3B2666435D03AA91F352
        |       `-- 253F19AA30D5346662B3EA83CF79F0D7
        `-- 2
            |-- data
            |   |-- 5ACDD4Z000004004U8RGKHSZM2592M8H
            |   |-- F3XG47Z000004004U8RG1214Z2592M80
            |   `-- NYFBTSZ000004004U8RFT7Q8A2592M7Y
            |-- protolog
            |   |-- last.log
            |   `-- last.log.lock
            |-- public
            |   `-- 8CN7S0800000A004UH0Q9TP323BBNZ8E
            |       `-- Familypicture.jpg
            `-- snapshot
                |-- last.log
                `-- last.log.lock

When Spaces are created, they are evenly distributed across individual volumes, based on the relative disk space utilization ratio of each available volume. A Space is identified in the file system by its unique database ID.
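One plausible reading of this distribution rule is "place the new Space on the operational volume with the lowest utilization ratio". The sketch below illustrates that reading only. The actual selection algorithm is not described here, and the `Volume` class and `pick_volume` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Volume:
    name: str
    used_bytes: int
    total_bytes: int
    operational: bool = True

    @property
    def utilization(self) -> float:
        """Fraction of the volume's capacity that is in use."""
        return self.used_bytes / self.total_bytes


def pick_volume(volumes: list[Volume]) -> Volume:
    """Return the operational volume with the lowest utilization ratio.

    Assumption: "evenly distributed" is approximated by always choosing
    the least-utilized volume; the real service may weigh this differently.
    """
    candidates = [v for v in volumes if v.operational and v.total_bytes > 0]
    if not candidates:
        raise RuntimeError("no operational volume available")
    return min(candidates, key=lambda v: v.utilization)


if __name__ == "__main__":
    vols = [
        Volume("vol01", used_bytes=800, total_bytes=1000),
        Volume("vol02", used_bytes=300, total_bytes=1000),
        Volume("vol03", used_bytes=100, total_bytes=1000, operational=False),
    ]
    print(pick_volume(vols).name)
```

Comparing ratios rather than absolute free space keeps volumes of different sizes filling at a similar relative rate.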

The TeamDrive Clients store the data for a Space separated according to metadata (protolog directory) and contents (data directory).
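The layout shown in the tree above can be expressed as a small path helper. This is a sketch based only on the example tree: the function name `space_dirs` is hypothetical, and the root directory and volume name are taken from the example rather than from any documented setting.

```python
from pathlib import PurePosixPath

# Subdirectories that appear under a Space directory in the example tree.
SPACE_SUBDIRS = ("protolog", "data", "public", "snapshot")


def space_dirs(root: str, volume: str, space_id: int) -> dict[str, PurePosixPath]:
    """Map each subdirectory name to its path for the given Space.

    The Space directory is named after the Space's database ID.
    """
    base = PurePosixPath(root) / volume / str(space_id)
    return {name: base / name for name in SPACE_SUBDIRS}


if __name__ == "__main__":
    dirs = space_dirs("spacedata", "vol01", 2)
    print(dirs["protolog"] / "last.log")
```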

Metadata is appended to a log file and reflects the history of the Space by storing all events (invitations of users, creation of directories, files and all modifications, etc.). All data stored on the Hosting Service is encrypted and only the TeamDrive Clients can decrypt it. It is not possible to read the original space data in the log.

New data is continually added to the data directory in each Space directory. Existing data is never overwritten, with the exception of data that has not been uploaded fully and where the upload may restart. File names are created using a Global Unique ID algorithm in the TeamDrive Clients that prevents two different clients from creating the same name. When permanently deleting files (e.g. when emptying the recycle bin of a Space), these files are deleted on the server, to free up storage space.

The last.log.lock file in each Space is used internally for providing a reliable locking mechanism to prevent multiple clients from appending data to the last.log file at the same time. Hence, the underlying storage or file system needs to support proper file locking (the mod_pspace Apache module depends on flock(LOCK_EX) to be reliable).
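To show what this locking requirement means in practice, here is a minimal sketch of appending to a log while holding an exclusive `flock` on a separate lock file. It is an illustration under assumptions, not the module's actual code: the function name `append_locked` is hypothetical, and exactly how mod_pspace takes and releases the lock is not documented here. The example uses Python's standard `fcntl` module and therefore runs on Unix-like systems only.

```python
import fcntl
import os


def append_locked(log_path: str, record: bytes) -> None:
    """Append a record to log_path while holding an exclusive lock.

    The lock is taken on "<log_path>.lock", mirroring the
    last.log / last.log.lock pairing in the directory tree.
    """
    lock_path = log_path + ".lock"
    # Open (or create) the lock file; its contents are irrelevant.
    with open(lock_path, "a") as lock_file:
        # Blocks until no other process holds the lock.
        fcntl.flock(lock_file.fileno(), fcntl.LOCK_EX)
        try:
            with open(log_path, "ab") as log:
                log.write(record)
                log.flush()
                os.fsync(log.fileno())  # push the data to stable storage
        finally:
            fcntl.flock(lock_file.fileno(), fcntl.LOCK_UN)
```

If the underlying file system does not implement `flock` correctly, for example on some network mounts, two writers could both believe they hold the lock, which is why the storage must support proper file locking.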

The public folder contains unencrypted files that have been published (uploaded) by the TeamDrive Clients. Published files are read-accessible via HTTP or HTTPS (depending on the server configuration) by anybody, including users who do not have a TeamDrive Client installed. A TeamDrive Professional Client license is required to publish files.

Finally, versions 3.2.0 or later of the TeamDrive Client support a so-called “Snapshot” feature, which cuts down the time it takes to enter a Space considerably. The information required to implement this functionality is stored in the snapshot subdirectory of a Space.

Spaces, Owners, and Depots

All Spaces created on a host are allocated to a specific Space Depot. A Space Depot has a storage quota and traffic limit. TeamDrive Client users require the access information of a Depot in order to create a Space.
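The relationship between a Depot, its limits and its Spaces can be pictured with a small data model. This is a hypothetical sketch only: the class, field and method names are illustrative, the units are assumed to be bytes, and how the Hosting Service actually reacts when a quota is exceeded is not described here.

```python
from dataclasses import dataclass, field


@dataclass
class Depot:
    storage_quota: int  # maximum stored data, assumed to be in bytes
    traffic_limit: int  # maximum transferred data, assumed to be in bytes
    storage_used: int = 0
    traffic_used: int = 0
    space_ids: list[int] = field(default_factory=list)

    def add_space(self, space_id: int) -> None:
        """Allocate a Space to this Depot."""
        self.space_ids.append(space_id)

    def can_store(self, size: int) -> bool:
        """True if storing `size` more bytes stays within the quota."""
        return self.storage_used + size <= self.storage_quota

    def record_upload(self, size: int) -> None:
        """Account for an upload against storage and traffic counters."""
        if not self.can_store(size):
            # Assumption: reject the upload; real behaviour may differ.
            raise ValueError("storage quota exceeded")
        self.storage_used += size
        self.traffic_used += size


if __name__ == "__main__":
    depot = Depot(storage_quota=1000, traffic_limit=5000)
    depot.add_space(1)
    depot.record_upload(400)
    print(depot.storage_used, depot.can_store(700))
```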

If enabled, the TeamDrive Registration Server creates the necessary Depot (called the default Depot) required by the TeamDrive Client during registration of a client. For this purpose the TeamDrive Registration Server must have API access to the Hosting Service.

After the Depot has been created on the Hosting Service, the access information is returned to the TeamDrive Client via the Registration Server. The default Depot is linked to the registration of the TeamDrive Client, and cannot be used by any other user.

The Space Owner and Space information is recorded when a Space is created using the TeamDrive Client.

In addition to the default Depot, additional Depots can also be created manually via the Registration Server’s and the Host Server’s Administration Console. See chapter Manually creating a Depot in the Host Server Administration Guide for details.

Background Tasks Performed by td-hostserver

The td-hostserver process is a service running on a Host Server instance that executes background tasks scheduled by the Hosting Service. It uses the Yvva daemon yvvad to run the tasks at regular intervals.

How to start the td-hostserver process is described in the section: Starting td-hostserver.

A complete description of the tasks performed by td-hostserver is provided in the chapter on Hosting Service Management: Managing Auto Tasks.