Setting up an Amazon S3/Azure BLOB Storage/Ceph Object Storage-Compatible Object Store¶

Installation and configuration of the TeamDrive Daemon s3d is only

required if TSHS (see TeamDrive Scalable Hosting Storage for details) is not enabled and you have an

Amazon S3/Azure BLOB Storage/Ceph Object Storage compatible object store you

wish to use as a secondary storage tier.

Currently, Amazon S3, OpenStack Swift, Ceph Object Gateway (version 0.8 or higher, using the S3-compatible API) or Azure BLOB Storage are supported.

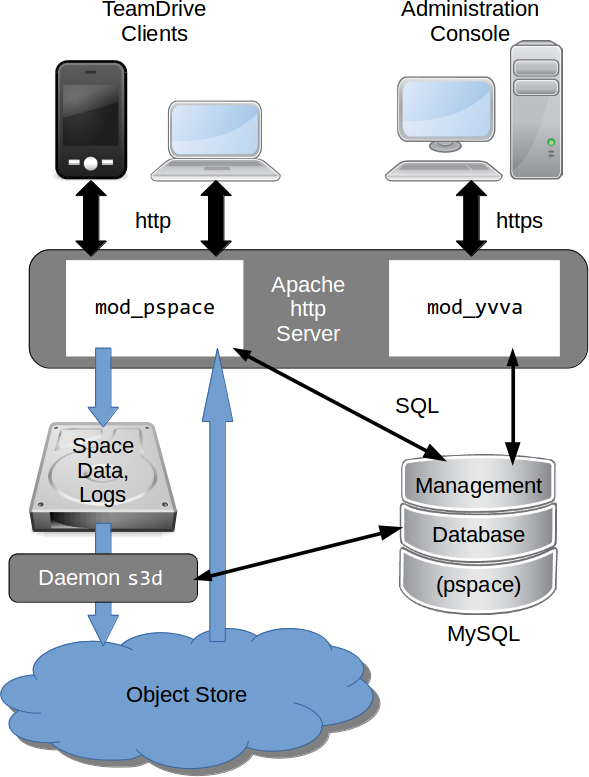

TeamDrive Hosting Service using an object store

s3d is a process that runs in the background and provides secondary

storage by transferring files to the object store. It does this by monitoring

the hosted data directory structure and transferring files to the object store

when a file reaches an age as specified in the service’s configuration. The

configuration settings also allows you to specify what files are eligible to

be transferred via the use of pattern matching.

Note

Because all object store requests will be signed using the current timestamp,

it’s essential that the system time is accurate when running s3d.

Make sure that the NTP service is installed and running. See the chapters

about NTP configuration in the installation guides for details.

Configuring s3d¶

The configuration of s3d is performed by changing the relevant

configuration settings using the Host Server Administration Console.

Log into your Host Servers Administration Console at https://hostserver.yourdomain.com/admin/ and click on Settings –> Object Store.

The following information is needed by s3d to connect to the object

store.

S3Brand- This setting specifies the type of S3 storage. Valid options are: Amazon, OpenStack or Azure.

S3Server- Your object store’s domain name, e.g.

s3.amazonaws.comoryouraccount.blob.core.cloudapi.de. S3DataBucketName- The name of the Bucket in the object store that will contain the Space data. The bucket must already exist.

Warning

If you are setting up multiple TeamDrive Hosting servers it is important that they do not use the same Bucket. Doing so can result in data loss.

S3AccessKey- Your object store access (public) key, used to access the specified bucket.

S3SecretKey- Your object store secret (private) key used to access the specified bucket.

S3SyncActive- Set this to

Trueto enable the synchronization of data stored by the Host Server (Space data) to the specified bucket on an compatible object store. Note that the synchronization won’t start until thes3dservice has been started (see below). S3Options- These options control the way the object store is accessed, for example the

number of parallel threads during upload, whether to use multipart upload,

etc. The options may also contain

S3Brandspecific settings. S3EnableRedirect- When S3 redirect is enabled, the Host Server will redirect the Client to a

download directly from the object store, when appropriate. This helps to

offload traffic from the Host Server to the object store. If set to

False, the Host Server fetches the requested object from the object store and serves it to the Client directly.

Starting and Stopping the s3d service¶

You can use the /etc/init.d/s3d init script to start and stop s3d. The

configuration setting S3SyncActive needs to be set to True, otherwise

s3d will abort with a corresponding error message.

Warning

Enabling the s3d Daemon means that any new data in existing Spaces and new Spaces will be transferred to the object store. Currently, there is no automatic way to return back to a pure file-based Host Server setup — data that was moved to the object store stays in object store. Disabling the secondary storage tier would result in Clients no longer being able to access their Space data.

After starting s3d with the command service s3d start, check the log

file /var/log/s3d.log for startup messages. You can use service s3d

status to check if s3d is up and running.

s3d should be added to the processes to be started at boot time. To do

this execute the following commands as root:

[root@hostserver ~]# chkconfig s3d on

Optional configuration parameters¶

The following optional configuration options can be modified in the

S3Options configuration setting:

MinBlockSize- The minimum block size that can be used for multi-part upload. The valid range of this parameter will be determined by the implementation of the object store being used.

MaxUploadParts- The maximum number of parts a file can be divided into for upload. The valid range of this parameter will be determined by the implementation of the object store being used.

MaxFileUploadSize- Files larger than this will not be transferred to S3. The default, 0, means all files are uploaded.

MaxUploadThreads- The thread pool size to use for multi-part uploads. The default, 0, means single threaded.

BucketAsSubdomain- Amazon S3 uses the bucket name as part of the domain name

(

BucketAsSubdomain=1), while other object stores (e.g. Azure or OpenStack) include the bucket name as part of the path name after the domain (BucketAsSubdomain=0). This option usually does not have to be set explicitly, as theS3Brandsetting determines this value automatically. MaxBandWidth- The S3 Daemon could be limited to avoid bandwidth conflicts with the clients. The value you have to enter is the used bandwidth in MB/s. The default, 0, means no limitation.

OpenStack configuration parameters¶

If the S3Brand is set to OpenStack then 2 additional parameters are

required in S3Options, to enable the generation of temporary URLs.

Temporary URLs give clients temporary direct access to objects in the object

store, helping to reduce network traffic on the Host Server. This requires the

setting S3EnableRedirect to be set to True.

OpenStackAuthPath- This is the path component of the OpenStack Authorization URL. For example

if the OpenStack Authorization URL is

https://swift-cluster.example.com/v1/AUTH_a422b2-91f3-2f46-74b7-d7c9e8958f5d30

then the

OpenStackAuthPathwould be /v1/AUTH_a422b2-91f3-2f46-74b7-d7c9e8958f5d30. OpenStackAuthKey- Your OpenStack temp URL key used to generate temporary URLs.

Your OpenStack administrator should be able to provide you with the OpenStack Authorization URL and the corresponding temp URL key.

Warning

The generation of new temp URL keys will invalidate older keys. It is important that once the temp URL key has been set for your TeamDrive Hosting server that no new keys are being generated.

Enabling Object Store Traffic Usage Processing¶

When S3 storage has been enabled, TeamDrive Clients access the data in the object store directly. The traffic required by these operations is recorded by reading the object store access log files.

This means that setting S3SyncActive to True changes the way Traffic

usage is calculated. When S3SyncActive to False, the Hosting Service

is able to record all traffic usage in the pspace database. When

S3SyncActive to True the object store access logs myst be downloaded

and parsed to get the required information.

Note

At present, only Amazon S3 and Azure BLOB Storage supports the concept of providing access log files via a dedicated log bucket (S3) or log folder (Azure). If your object store doesn’t support providing access logs, leave the following four settings empty. The host server will calculate the file size of each returned redirect URL to the external object store as traffic for this space. This might be not exact, because a client might send a download request for the same file again in case that the download was not complete. The download will then start at the offset where the former download stopped. A new redirect will not result in a full file download in this case. In case your object store offers access logs, the traffic usage processing expects the Amazon S3 / Azure BLOB Storage access log format. See S3 Server Access Logging in the Amazon S3 documentation for details or Azure Storage Logging on how to enable server access logging.

In addition, the following configuration settings have to be defined:

- S3LogBucketName:

- Name of the bucket (S3) / $logs-folder (Azure) that contains the access logs. Note that if this setting is empty, the object store traffic will not be calculated. You must configure your object store to save the access logs to this bucket / $logs-folder. Please refer to your object store documentation to determine how this is done.

Note

The bucket used for the log files must be a dedicated bucket. This is because the script that processes the files download everything assuming the bucket only contains log files.

- S3ToProcessPath:

- Local path in the filesystem to download above access logs for further

processing. By default, this path is set to

/var/opt/teamdrive/td-hostserver/s3-logs-incoming/ - S3ArchiveLogs:

- If set to

True, the processed access logs will be moved to the folder defined in the next settingS3ProcessedPath. - S3ProcessedPath:

- If logs need to be kept for own additional analysing, then the access log files will be moved to this directory once traffic usage Processing is complete.

By default, the task Process S3 Logs that calculates the traffic runs

every 10 minutes.